Hi all!

Today marks the first release of a group of plugins I have been working on to help organize and lay out Substance Designer graph networks. Alongside it comes the release of bwTools, a Substance Designer plugin that bundles these tools and gives me a platform to share any future work. As a bonus, my previous Optimize Graph plugin is bundled with it. This post serves as the documentation for bwTools; for an overview, see the links below.

You can find an overview video here:

https://www.youtube.com/watch?v=4Ckh0mgwYcA

Store page:

https://www.artstation.com/benwilson/store/ewNd/bwtools-substance-designer-plugin

Please contact me for support through ArtStation or email!

Release Notes:

Version 1.3

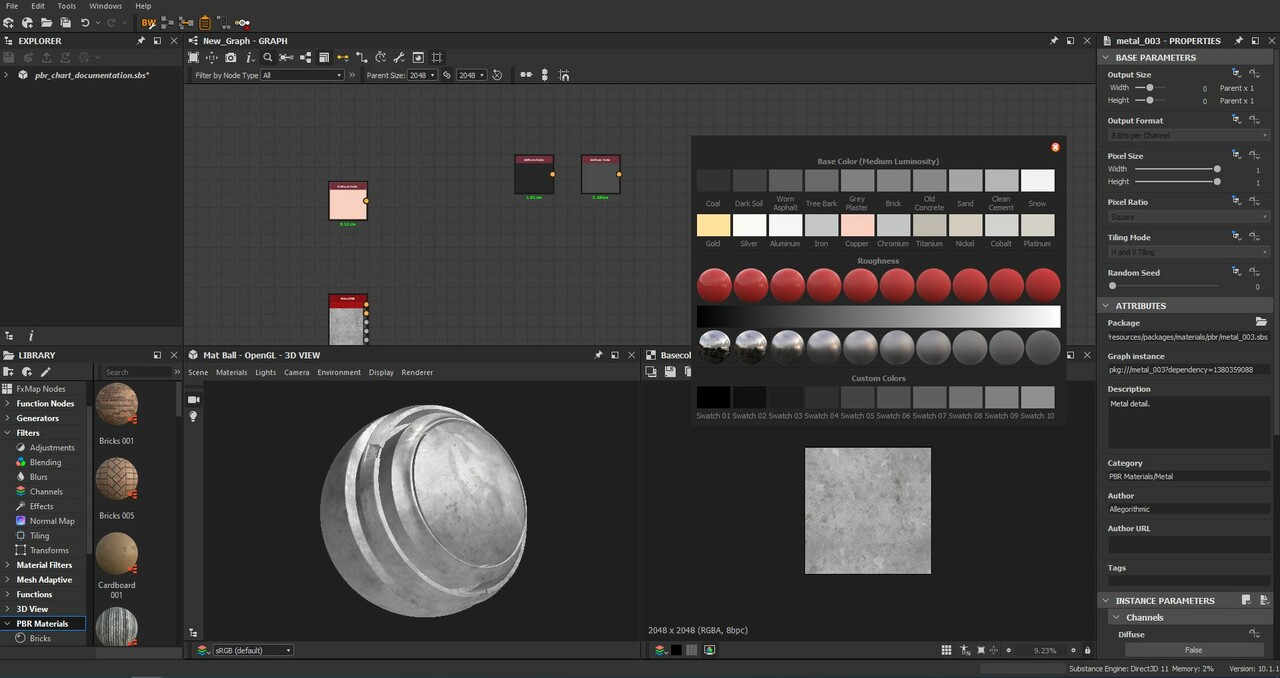

- New plugin added: PBR Color Chart

- A convenient PBR color chart built directly into Designer

- Based on the DONTNOD Unreal Engine 4 PBR values

- The color chart remains on top of Designer to make color picking easy

- Color swatches are selectable and draggable, and hide the UI to allow easy comparison with your texture

- Supports up to 10 custom swatches

Version 1.2

- Fixed bug where some people were not able to load the plugin

Version 1.1

- Updated plugin to Substance Designer 2020.1.1 onwards. This update is not backwards compatible and will not work with Designer 2019!

- Fixed tooltips on toolbar icons

================================================

Installation

If you have a previous version installed, first delete the old bwTools folder inside C:\Users\Ben\Documents\Allegorithmic\Substance Designer\python\sduserplugins (substituting your own user name for Ben) and restart Designer.

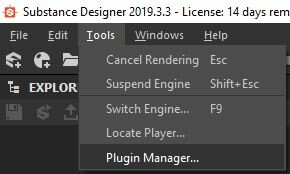

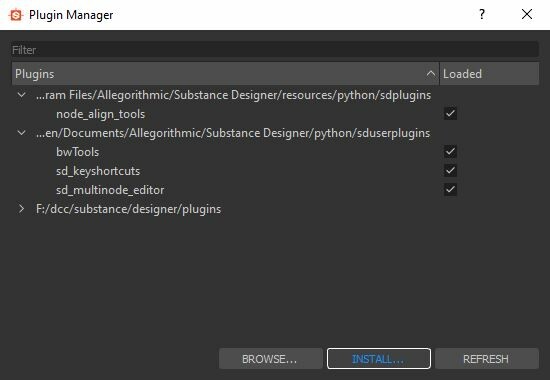

Open Substance Designer and navigate to Tools > Plugin Manager...

Click Install at the bottom.

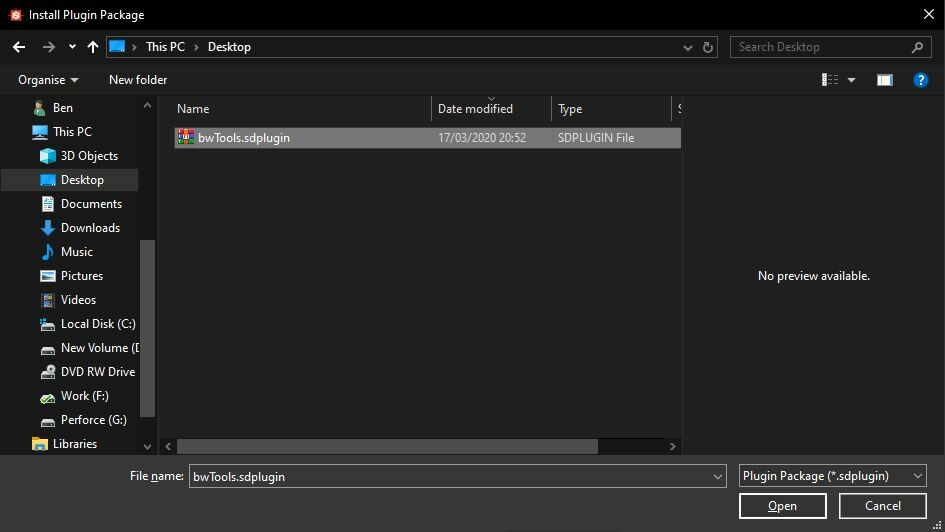

Navigate to your bwTools.sdplugin file and click Open.

================================================

================================================

bwTools

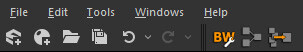

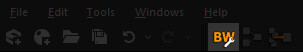

bwTools consists of two parts: a toolbar at the top of the Substance Designer application and the various plugins which make up the tools.

All settings for individual plugins currently installed can be found in the settings window here.

================================================

================================================

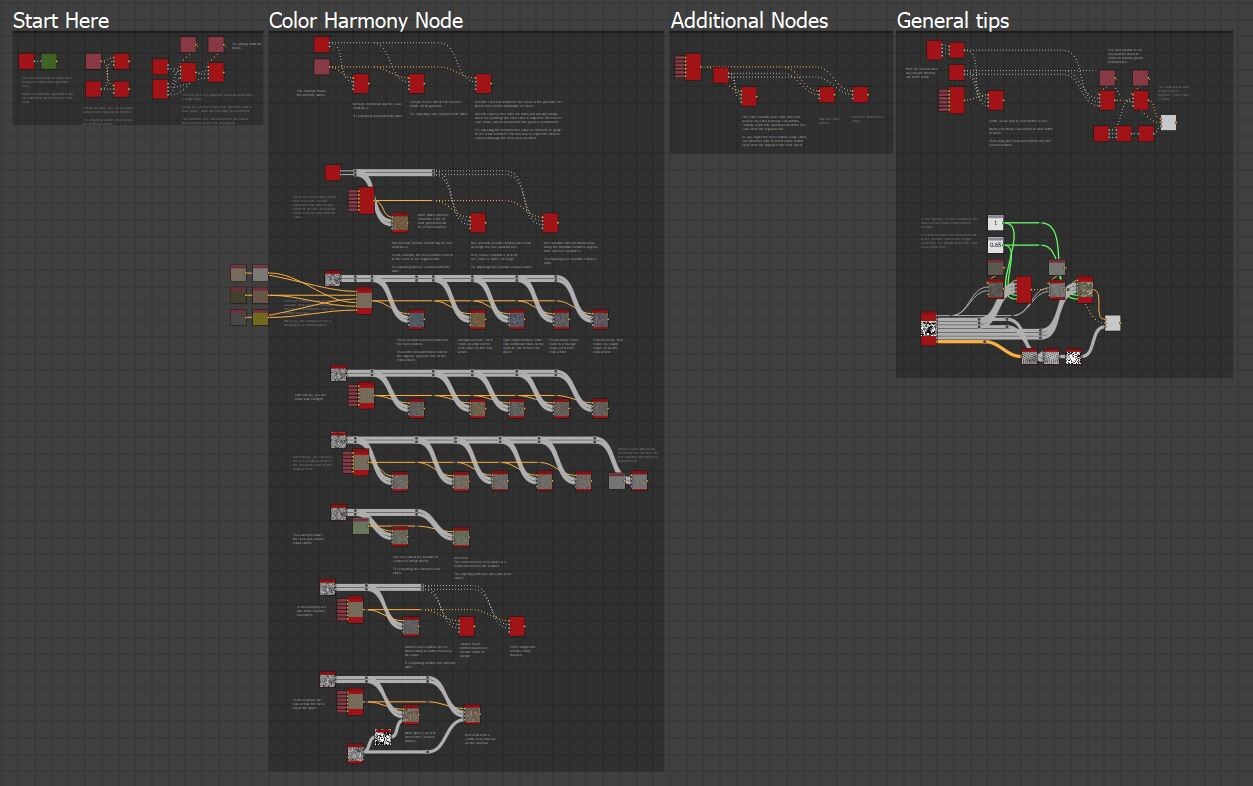

Layout Graph

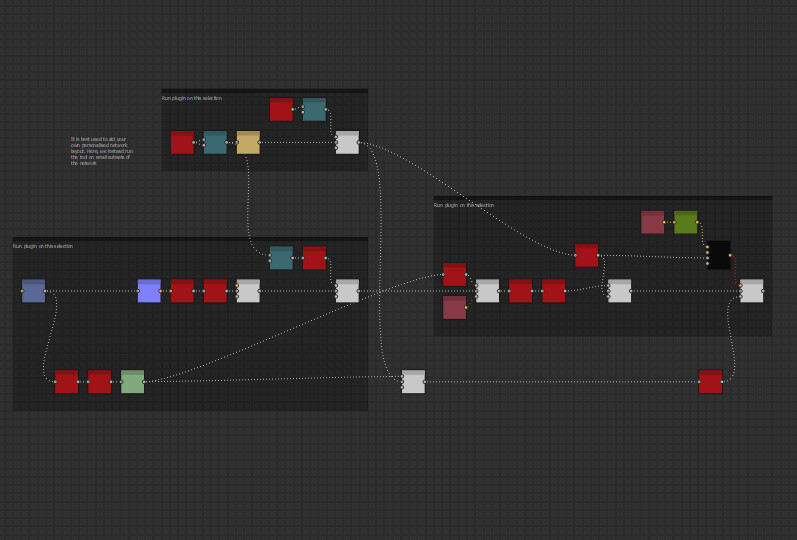

This tool is designed to help speed up the laborious task of neatly arranging your nodes. It is best used as a helper tool when laying out your graph to your personal style. To make the most of it, we must understand how it wants to lay out your node selection.

Node placement behavior

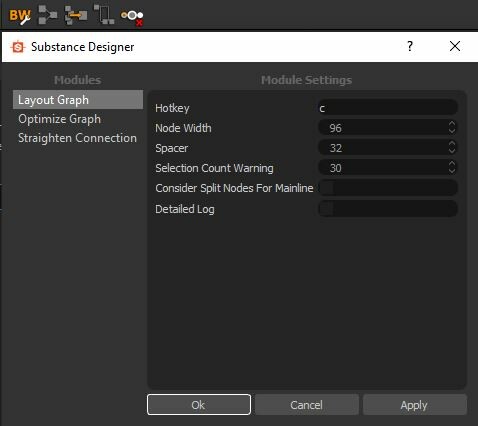

Nodes are always placed behind their outputs, and always in line with the output which produces the longest straight line.

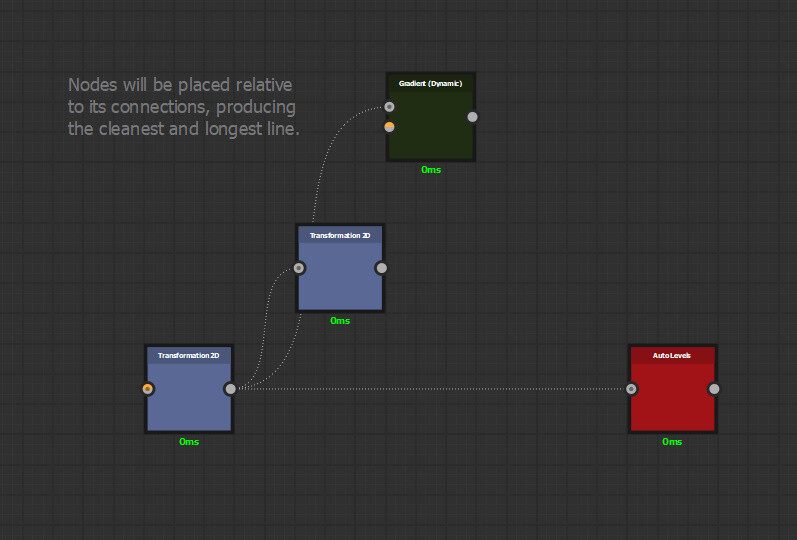

Nodes have a concept of height, which means they will correctly stack on each other.
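To make the stacking idea concrete, here is a minimal sketch in plain Python. It is not the plugin's actual code: the function name, the default values and the "first input sits in line with its output, the rest stack below it" rule are all assumptions made for illustration, and positions are treated as node centres with y growing downward.

```python
def place_inputs(output_pos, input_heights, node_width=96.0, spacer=32.0):
    """Place input nodes one column behind their output node.

    Hypothetical helper: node_width and spacer mirror the Node Width and
    Spacer settings, but the default values here are made up.
    """
    out_x, out_y = output_pos
    # Every input goes one column to the left of (behind) the output.
    x = out_x - node_width - spacer
    positions = []
    y = out_y  # Assumption: the first input lines up with the output.
    prev_height = None
    for height in input_heights:
        if prev_height is not None:
            # Move down by half of each neighbour plus the spacer, so
            # nodes of different heights never overlap.
            y += prev_height / 2 + spacer + height / 2
        positions.append((x, y))
        prev_height = height
    return positions


print(place_inputs((0.0, 0.0), [100.0, 50.0]))
```

A taller node pushes the next node further down, which is why hidden inputs/outputs that the plugin cannot see end up leaving gaps.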

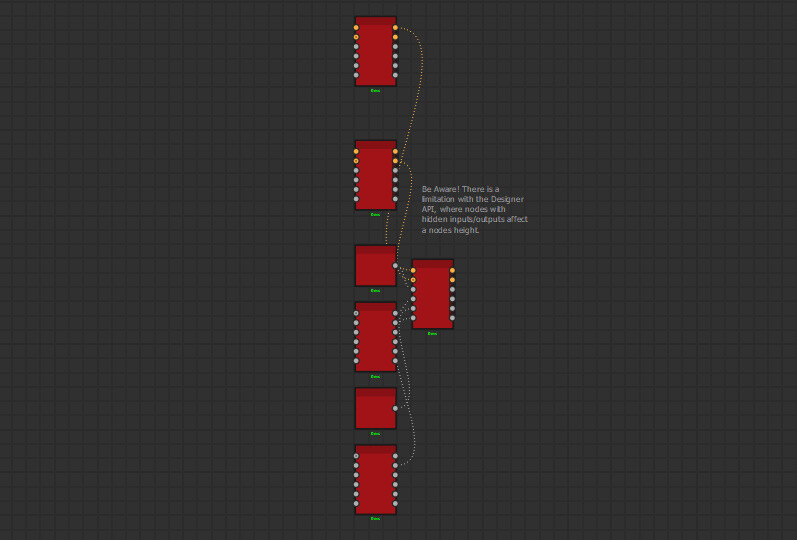

However, be aware that due to a limitation with the Substance Designer API, the plugin assumes all inputs/outputs are visible. In the example below, the top two nodes have many hidden inputs/outputs, leaving artificial gaps.

Nodes which start a chain are called root nodes. These are left untouched, and all nodes feeding into them are arranged accordingly. Therefore, it is important to provide enough space for the network to expand if there are multiple root nodes.

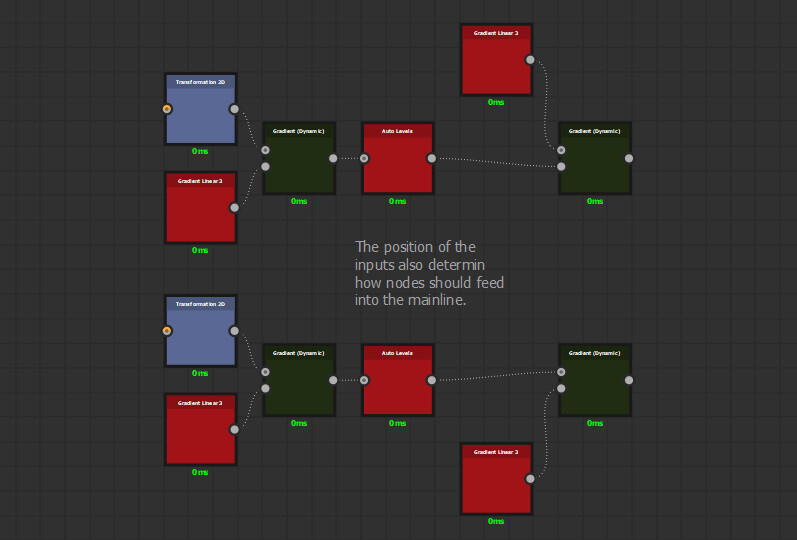

The Mainline Concept

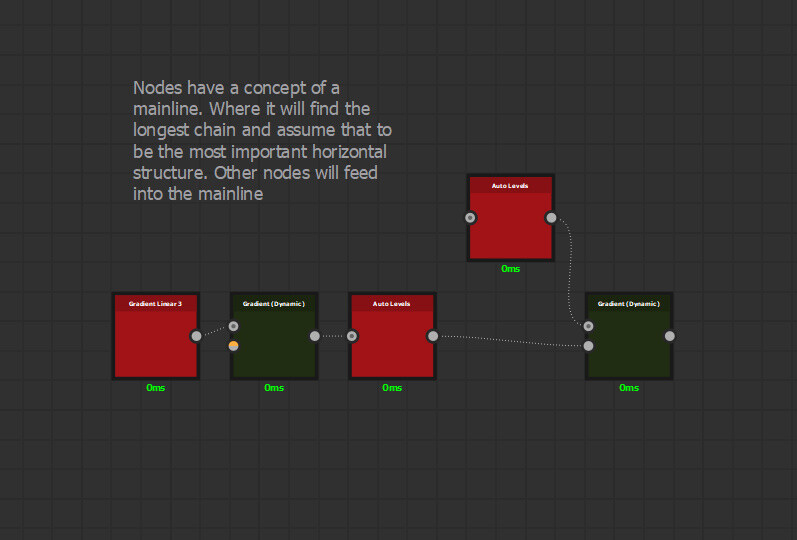

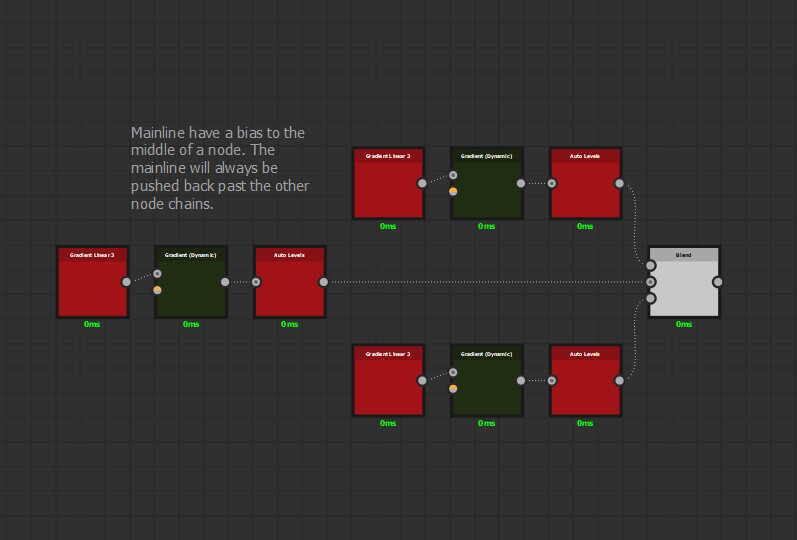

The tool wants to find a mainline through your selection and provide space for other parts of the network to feed into it. It typically assumes the network with the longest chain is the mainline.
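As a rough illustration of "the longest chain wins", the sketch below finds the longest input chain behind a root node. It is plain Python on a made-up graph, not the plugin's real algorithm: the `inputs` dictionary, the node names and the `longest_chain` function are all hypothetical.

```python
from functools import lru_cache

# Hypothetical graph: each node maps to the nodes plugged into its inputs.
inputs = {
    "output": ["blend"],
    "blend": ["levels", "mask"],
    "levels": ["blur"],
    "blur": ["noise"],
    "noise": [],
    "mask": ["shape"],
    "shape": [],
}


def longest_chain(node, inputs):
    """Return the longest input chain ending at `node`, root node first."""

    @lru_cache(maxsize=None)
    def walk(current):
        best = ()
        for upstream in inputs.get(current, []):
            candidate = walk(upstream)
            # Ties keep the first chain found; the real tool may differ.
            if len(candidate) > len(best):
                best = candidate
        return (current,) + best

    return list(walk(node))


mainline = longest_chain("output", inputs)
print(mainline)
```

Here the noise/blur/levels branch is longer than the shape/mask branch, so it would be treated as the mainline and the mask branch would feed into it.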

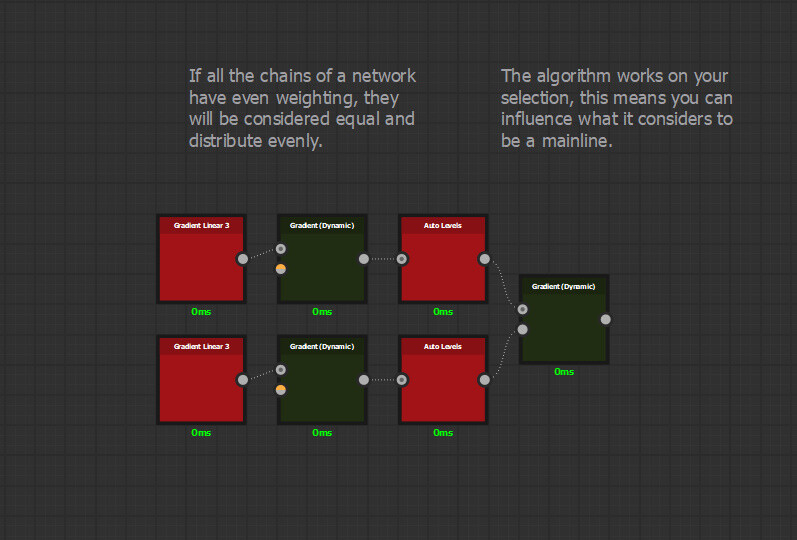

If it considers your networks equally important, it will simply space them evenly.

This makes it possible to influence the layout based on your selection.

Other nodes are inserted into the mainline relative to their input position.

However, the tool will generally favor the middle node chain where possible.

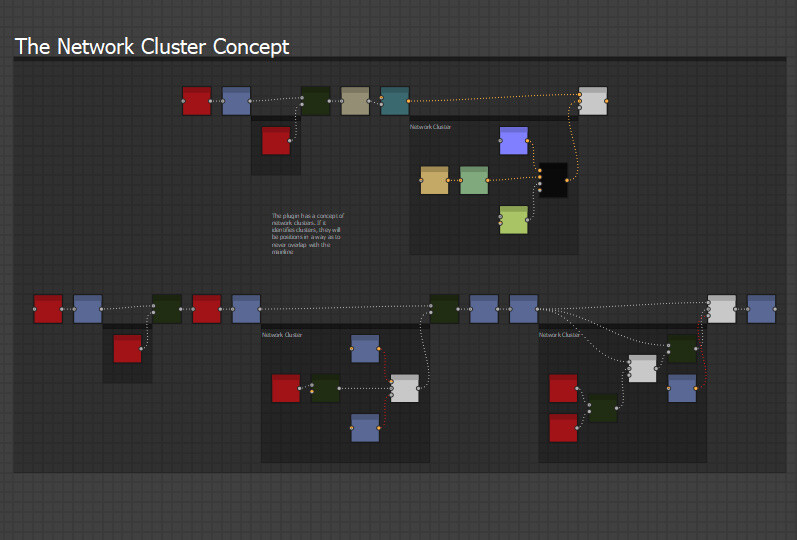

The Network Cluster Concept

Groups of related nodes form network clusters, and these are what feed into the mainline. They are given space and positioned such that they never overlap with the mainline. The darker frames below are network clusters.

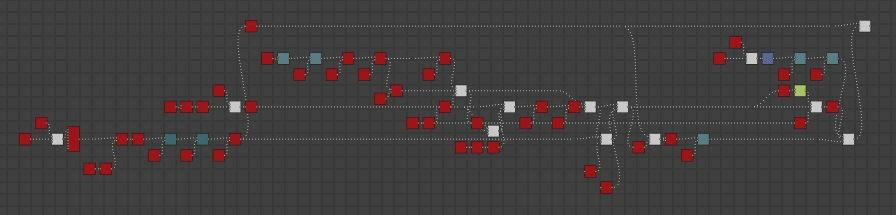

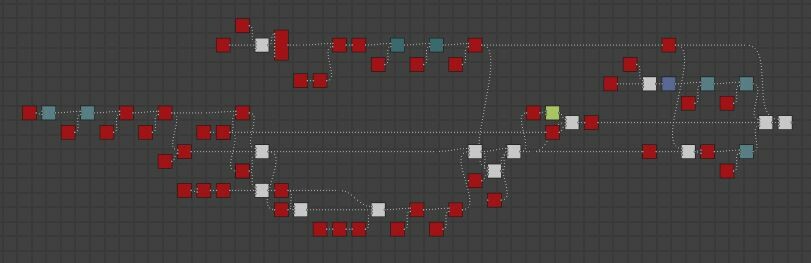

Looping Networks

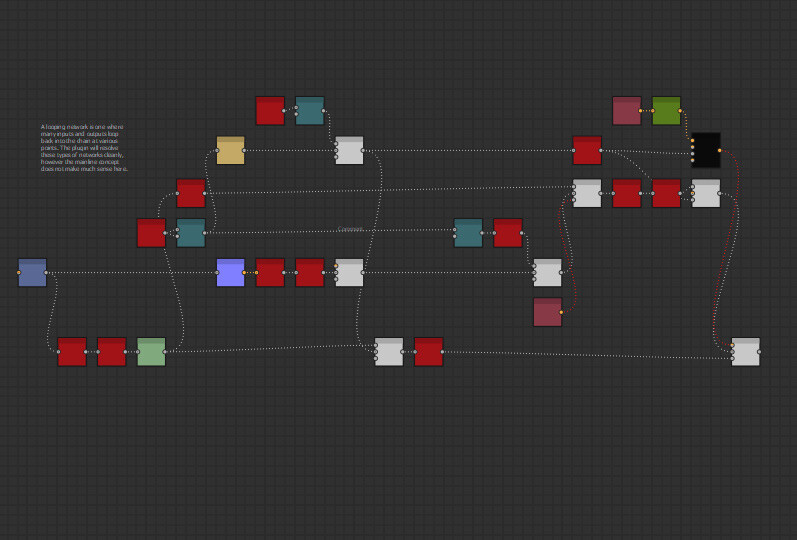

Nodes that loop back into the network at various points form looping networks. These are often very complicated and can sometimes stretch the entire length of the graph.

While the tool will successfully handle these types of networks, it is often better to lay out your graph more contextually, using the tool as an aid to speed up the process. Taking the network above, you would run the tool on smaller network clusters instead (shown in the darker frames), then position them contextually to fit your personal style.

Settings

Hotkey

You can define your hotkey here. Requires a Substance Designer restart.

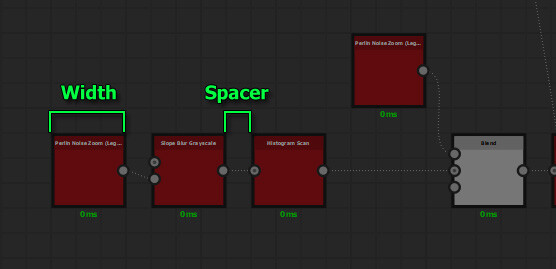

Node Width

Sets the width of each node. Generally you can leave this untouched.

Spacer

Sets the distance between each node.

Selection Count Warning

The plugin's processing time grows exponentially with the number of selected nodes, so large selections can take a very long time to compute. A warning is displayed before the plugin runs if the number of selected nodes exceeds this threshold.
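The check itself is simple. A minimal sketch, where the function name and the default threshold of 30 are hypothetical (use whatever value you set in the settings window):

```python
def should_warn(selected_count, threshold=30):
    """Return True when the selection is larger than the warning threshold.

    The default of 30 is a placeholder, not the plugin's real default.
    """
    return selected_count > threshold


for count in (10, 30, 31):
    print(count, should_warn(count))
```

Note that a selection exactly at the threshold does not trigger the warning in this sketch, only one that goes past it.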

Consider Split Nodes For Mainline

There are two styles for laying out the network. If Consider Split Nodes For Mainline is on, the algorithm will reason on split nodes first, generally preferring to use them as mainlines. This results in visually larger encasing loops.

If Consider Split Nodes For Mainline is off, split nodes are given the same priority as everything else. The visual result is more clearly defined node clusters and grouping. Unless there are a lot of complicated looping networks in your selection, there may be no difference between the settings.

================================================

================================================

Straighten Connection

This tool creates dot nodes from a given node to each of its connected outputs, which helps reduce visual clutter and improve readability.

Dot nodes will be chained together.

It works on multiple selections too, which is handy for cleaning up the entire graph at the end of a working session.

There is also a tool to remove the dot nodes connected to your selected node. It can be found in the toolbar.

Settings

Straighten Selected Hotkey

You can define your hotkey here. Requires a Substance Designer restart.

Remove Connected Dot Nodes Hotkey

You can define your hotkey here. Requires a Substance Designer restart.

Distance From Input

Defines how far from the input of each node to place the dot node.
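As a rough picture of what this setting controls, the sketch below computes where a dot node would go for one connection. This is an assumption about the behaviour rather than the plugin's code: the function name, the default distance and the idea that the dot shares the input pin's y coordinate (so the last segment of the wire is horizontal) are all hypothetical.

```python
def dot_position(input_pin_pos, distance_from_input=50.0):
    """Return an (x, y) position for a dot node feeding the given input pin.

    Hypothetical helper: the dot sits `distance_from_input` units to the
    left of the pin and at the same height, keeping the final wire straight.
    """
    pin_x, pin_y = input_pin_pos
    return (pin_x - distance_from_input, pin_y)


print(dot_position((200.0, 40.0)))
print(dot_position((200.0, 40.0), distance_from_input=120.0))
```

A larger distance gives a longer straight run into each node, at the cost of pushing the dot nodes further back into the graph.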

================================================

================================================

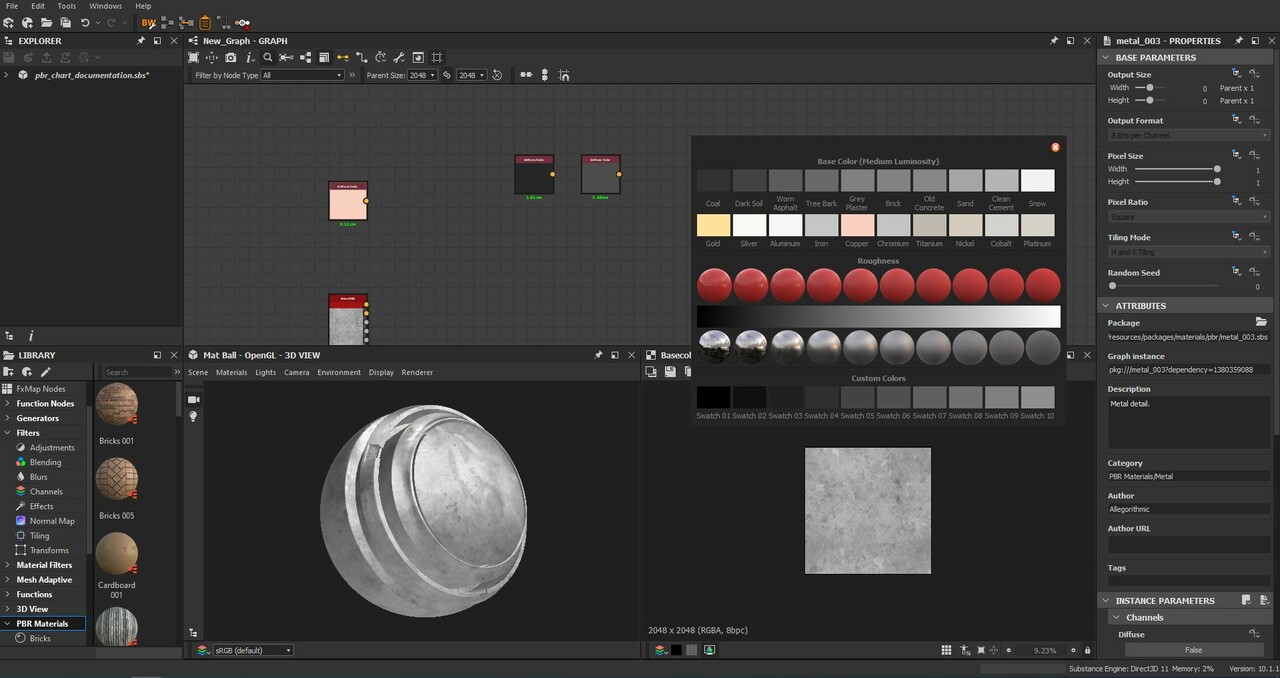

PBR Color Chart

This is a convenient PBR color chart built directly into Designer. It provides various PBR values, based on the DONTNOD chart, for quick and easy reference without needing to keep a color chart open in Windows. https://seblagarde.wordpress.com/2014/04/14/dontnod-physically-based-rendering-chart-for-unreal-engine-4/

It is always displayed on top of the Designer viewport, making it easy to color pick from.

Swatches are selectable and draggable, and hide the UI to allow for easy comparison with your texture. Simply click and drag over the 2D view to compare values directly.

Up to 10 custom colors are supported. You can edit the colors and names as needed.

================================================

================================================

Optimize Graph

This tool can be used to optimize various parts of your graph.

Remove Duplicate

Composite Nodes - Evaluate Input Chain

If this is on, the plugin will also identify chains of identical nodes and remove them.

The plugin follows some rules for what it regards as a duplicate.

Settings must be identical for a node to be considered a duplicate.

A node with an exposed parameter is not considered a duplicate.
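The two rules above can be sketched in plain Python as follows. This is only an illustration, not the plugin's implementation: the dictionary layout, the field names and the extra assumption that duplicates must also share the same inputs are all hypothetical.

```python
from collections import defaultdict


def find_duplicates(nodes):
    """Group nodes that could be merged; returns {kept_id: [duplicate_ids]}."""
    groups = defaultdict(list)
    for node in nodes:
        # Rule: a node with an exposed parameter is never a duplicate.
        if node["exposed"]:
            continue
        # Rule: settings must be identical. Assumption: the node type and
        # the incoming connections must match as well.
        key = (
            node["definition"],
            tuple(sorted(node["settings"].items())),
            tuple(node["inputs"]),
        )
        groups[key].append(node["id"])
    return {ids[0]: ids[1:] for ids in groups.values() if len(ids) > 1}


nodes = [
    {"id": "blur_1", "definition": "blur", "settings": {"intensity": 2.0},
     "inputs": ["noise"], "exposed": False},
    {"id": "blur_2", "definition": "blur", "settings": {"intensity": 2.0},
     "inputs": ["noise"], "exposed": False},
    {"id": "blur_3", "definition": "blur", "settings": {"intensity": 2.0},
     "inputs": ["noise"], "exposed": True},
    {"id": "blur_4", "definition": "blur", "settings": {"intensity": 5.0},
     "inputs": ["noise"], "exposed": False},
]
print(find_duplicates(nodes))
```

In this example only blur_2 is reported as a duplicate of blur_1: blur_3 has an exposed parameter and blur_4 has different settings.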

Uniform Color Nodes - Output Size

Reduces all selected uniform color nodes to 16x16, the optimal output size for a node in Designer. Connected outputs are automatically set to Relative to Parent.

Blend Nodes - Ignore Alpha

Any selected blend node which has only grayscale inputs will have its alpha blending mode set to Ignore Alpha.

Note: This setting requires a recompute of the graph, so is disabled by default.
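The selection rule for this option can be sketched like so. It is a plain Python illustration with a made-up data layout (the `input_types` field and the function name are hypothetical), not code from the plugin.

```python
def blends_to_ignore_alpha(blend_nodes):
    """Return the ids of blend nodes whose connected inputs are all grayscale."""
    return [
        node["id"]
        for node in blend_nodes
        # Assumption: a blend with no connected inputs is left alone.
        if node["input_types"]
        and all(kind == "grayscale" for kind in node["input_types"])
    ]


blend_nodes = [
    {"id": "blend_1", "input_types": ["grayscale", "grayscale"]},
    {"id": "blend_2", "input_types": ["color", "grayscale"]},
    {"id": "blend_3", "input_types": []},
]
print(blends_to_ignore_alpha(blend_nodes))
```

Only blend_1 qualifies here, since blend_2 has a color input.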